This is one of the most difficult traps to fix and, at the risk of being extremely unhelpful, my best advice is not to create the issue in the first place.

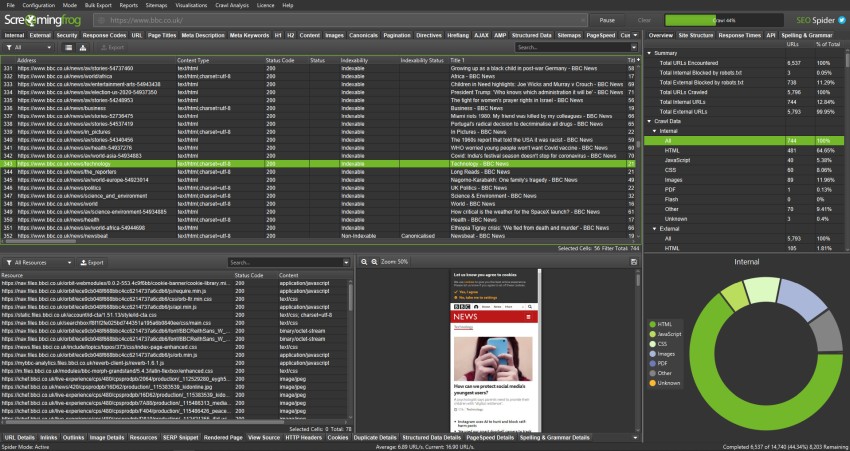

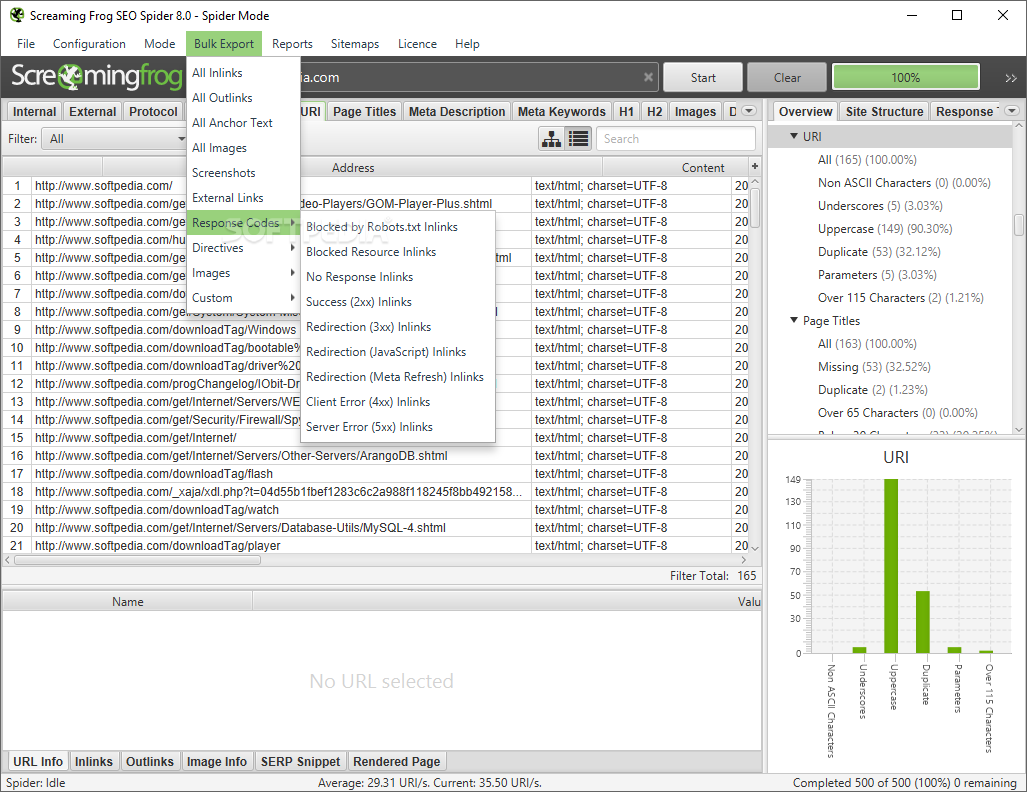

Notice how this spider trap has caused filtered pages to be indexed, which could dilute the site’s ranking potential. The trap occurs when a site has a number of items that can be sorted and filtered in a myriad of ways. Once a spider discovers that it can mix, match and combine the various filter types, it is sent on a never-ending loop through every combination available to it. Common filters such as color, size, price, or the number of products per page are some of the many parameters that can create issues for a crawler.

Look for elongated URL strings and recurring filter parameters. A never-ending loop within a crawler tool is again a red flag that your site might not be configured to handle faceted navigation in an SEO-friendly manner.

To find the source, use the crawler tool that located the trap and sort its results by URL length. Select the longest URL and you’ll find the root of the problem. Following this, sift through the source code of the page in question, looking for any further anomalies.

If you are knowledgeable in programming, there is a technical solution: disallow the offending parameter within the robots.txt file, or add server-side rules which ensure the URL string doesn’t exceed a maximum length.
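If your crawler tool can export its URL list, the "sort by URL length" step can also be done with a few lines of Python. The sketch below is only an illustration: the parameter names in `FILTER_PARAMS`, the `example.com` URLs and the function names are all assumptions, so swap in whatever your own site and crawl export actually use.

```python
from collections import Counter
from urllib.parse import urlsplit, parse_qsl

# Hypothetical filter parameter names; replace with the ones your site uses.
FILTER_PARAMS = {"color", "size", "price", "per_page"}


def filter_params_in(url):
    """Return the filter parameter names found in the query string, in order."""
    query = urlsplit(url).query
    pairs = parse_qsl(query, keep_blank_values=True)
    return [name for name, _ in pairs if name in FILTER_PARAMS]


def rank_suspect_urls(urls, top=5):
    """Sort URLs longest-first and count the filter parameters on each."""
    longest = sorted(urls, key=len, reverse=True)[:top]
    return [(url, len(url), Counter(filter_params_in(url))) for url in longest]


# Made-up sample data standing in for a crawl export.
crawled = [
    "https://example.com/shoes",
    "https://example.com/shoes?color=red",
    "https://example.com/shoes?color=red&size=9&color=blue&size=10&price=50-100",
]

for url, length, counts in rank_suspect_urls(crawled):
    # A filter that appears more than once in one URL hints at a loop.
    repeated = sorted(name for name, n in counts.items() if n > 1)
    print(length, url, "repeated:", repeated)
```

The longest URLs come out first, and any filter that shows up more than once in the same URL is listed as repeated, which is usually the parameter worth disallowing or capping.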
